“The artificial is always innocent” - Frank O’Hara

That quote is purposefully out of place on a data science and learning blog. For O’Hara, art is always artificial: being a product of tools makes it purely a matter of intent, so it does not have to be moral or have a purpose. I wanted to contrast it with our concept of artificial intelligence, which is so often measured solely in utilitarian terms but is clearly artificial. I think we have all seen deployments of AI that are not always innocent, and it is worth considering the interplay between the two.

I am trying to build a brain emulator which is either going to be highly utilitarian or a bit of an art project.

A Bit Of A Tangent As An Intro

I was floored recently by a talk I found on YouTube: “Blaise Aguera – What if intelligence Didn’t Evolve? It Was there From the Start!- Blaise Aguera y Arcas”. I had been quietly working through my big evolutionary algorithm, trying to get my Hodgkin and Huxley model of a human neurone working inside an artificial neural network, so that you would have an AI running that was highly similar to the human brain.

I had this whole hypothesis that the current transformer design behind GPT is a dead end. The fundamental issue is that it has no concept of time, so something else has to be developed, and that something would need to be closer to how we work. Partly that is how you get an AI with a sense of time, but also, end to end, the current transformer has parts that you would struggle to represent as a genetic profile to evolve and run different tests on.

Blaise Aguera's talk really hit me and made me want to finish hello world and my project Elihu (the name I chose for the AI).

The gelation phase transition is what really stood out to me in Blaise Aguera's work: how he explained a theory of how artificial life could evolve, with complexity emerging from simpler prior stages. He talks about this as symbiogenesis, the forming of better versions when certain mathematically predictable points are met in evolutionary systems. The minimum requirements for even relatively high complexity are not that high, and it does not need random mutation (which is why he would say intelligence did not evolve); being more intelligent is just being more resistant to entropy.

I really liked the proof by Blaise that a system with zero randomness could create complexity.

I have been putting this into my attempt to build a brain emulation. Reading the current literature from neuroscientists, I felt the Hodgkin and Huxley differential equations were sufficient to explain the spiking neurone you get in the human brain, so I used them as the basis for the code. I then looked at how that would learn and found that LTP, or long term potentiation, is not defined well enough for someone to copy and use in an AI brain emulation.
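For reference, the classical Hodgkin and Huxley equations for a single patch of membrane are usually written as below. This is the standard textbook form with sodium, potassium and leak currents only; any extra channels (such as a calcium term) or chemical feedback in the model described in this post are additions on top of it and are not shown here.

```latex
C_m \frac{dV}{dt} = I_{\mathrm{ext}}
  - \bar{g}_{Na}\, m^3 h \,(V - E_{Na})
  - \bar{g}_{K}\, n^4 \,(V - E_{K})
  - \bar{g}_{L}\,(V - E_{L})

\frac{dx}{dt} = \alpha_x(V)\,(1 - x) - \beta_x(V)\,x,
  \qquad x \in \{m, h, n\}
```

Here V is the membrane voltage, the g terms are the maximum channel conductances, the E terms are the reversal potentials, and m, h and n are gating variables between 0 and 1.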

Therefore I started building a genetic algorithm to reverse engineer a system for long term potentiation for an AI, using the Hodgkin and Huxley formulas I already had.

I then built a neurogenesis and neuroplasticity system so that the neurones behave like cells: they reach out to each other and connect or disconnect based on a set of rules.

It's got me set on setting up the A life (artificial life) code properly. This is a combination of a database system, the Hodgkin and Huxley description of a human neurone, a task (reading a book), a genetic system representing the chemical model and the changes in chemical concentration within the cells, and evolutionary methods, with the whole thing modularised so I can also try swapping out different functions. I think this gives the capability to change virtually everything, as everything can be expressed as a parameter and treated as either a chemical concentration or a code function, then tested and benchmarked against the alternatives.

I really like a sci-fi setting called Orion's Arm that has different singularity levels for Artificial Super Intelligence. The gelation phase transitions and symbiogenesis are one way that I think AI might be like a series of smaller singularities, or step changes, that each outperform the last. I think the A life design allows me both to run tests on parameters and to do pure A and B tests between different functions. Therefore my plan is just to keep testing until something does something like symbiogenesis, where one model evolves one part that is 10% better, then crosses the right parts with another model that is 10% better, and the result is probably not just 20% better.

I think an AI that would outperform the current Transformer architecture would need to account for time and memory, and therefore I think it needs to be closer to differential equations that represent states and a system's evolution in time. So while I think you could not model something like this Orion's Arm multi singularity idea at a lower level, if you could get an AI that has trainable differential equations then maybe you could make a step towards it.

I think you could not evolve to that point using matrix calculation, learning those differential equations with linear algebra to build a brain emulator, because I think the exploding and vanishing gradient problem would stop that. i.e. if you just used gradient descent and created an AI with recurrent connections back to itself in time, that does not create memory, because the feedback loop across those recurrent loops causes instability in the rest of the network's weights.
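As a minimal sketch of that instability, assume the simplest possible recurrent loop: a single value fed back on itself through one weight, h_t = w * h_{t-1}. This is a toy illustration, not the network from this project, and the name `gradient_through_time` is made up for the example. The gradient that flows back through T steps is just w multiplied by itself T times, so anything other than w close to 1 either dies away or blows up.

```python
def gradient_through_time(w: float, steps: int) -> float:
    """Gradient of h_T with respect to h_0 for the toy recurrence h_t = w * h_{t-1}."""
    grad = 1.0
    for _ in range(steps):
        # Chain rule: each step back in time contributes one more factor of w.
        grad *= w
    return grad


if __name__ == "__main__":
    for w in (0.9, 1.0, 1.1):
        print(f"w={w}: gradient after 100 steps = {gradient_through_time(w, 100):.3e}")
```

With a weight slightly below 1 the signal from 100 steps ago has all but vanished, and with a weight slightly above 1 it has exploded, which is the instability described above in its smallest form.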

I think this is kind of obvious when you think of it: a traditional neural network using gradient descent is an energy model, and all these recurrent links add more and more time based connections. Yet time has entropy that smooths out energy, and neural networks (ANNs) as they are now cannot model that entropy.

A philosophical question is whether that proper timing can be “learned” at all, or whether it must be pre-evolved and baked into the model from the beginning. i.e. I would guess entropy needs to be properly modelled in the AI to model time properly. This presupposes differential equations that transition between states, but the timing of that system cannot be such that a neurone fires and then takes forever to recover, so that the signal previously cycling as a memory dies, nor such that the neurone is always on. Therefore it feels like a good question to ask whether that can be “learned” at all or must already be pre-evolved.

I really mean that, because if you think of our brain, it looks to have processes like an ANN, its neuroplasticity means it forms a graph like a decision tree, and its chemical concentrations are best represented with differential equations.

If the timing needs to be evolved or pre-programmed to best fit the processes it is working with, then how could there be AGI or ASI, period, because each task might require different differential equations? I am just saying that which side of that you come down on really does change your thinking on a range of subjects.

Combine those ideas: if you could not get to AGI or ASI through linear algebra, then you need to create something akin to our neurones and simulate their chemical makeup, because the fluctuation in the cell's chemical makeup would then act like that entropy and be a constraint on the timing and on changes over time, and thereby resist exploding or vanishing gradient problems. Thereafter, the chemical model that you would need to develop is itself something that can be subjected to continuous evolution to be fine tuned for the purpose of general intelligence.

Simples… If not, it will be a cool art piece.

A trainable digital spiking neurone network

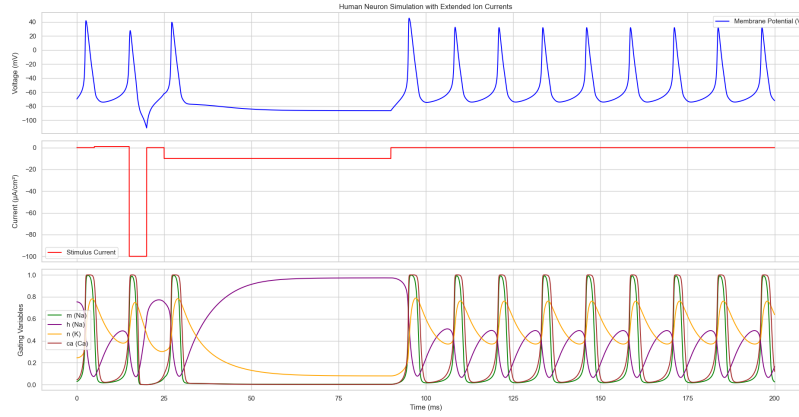

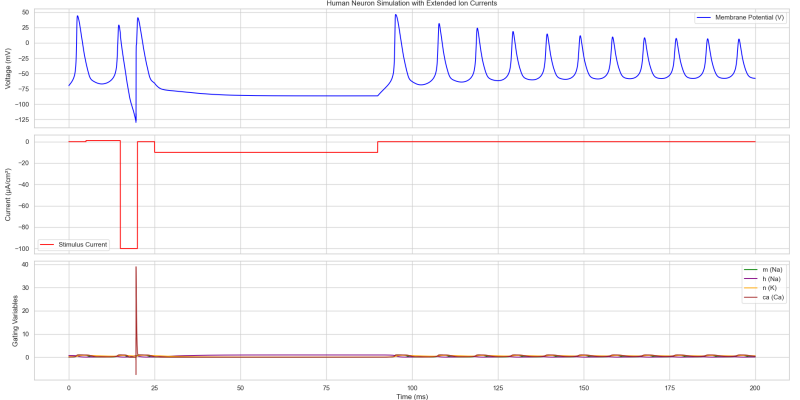

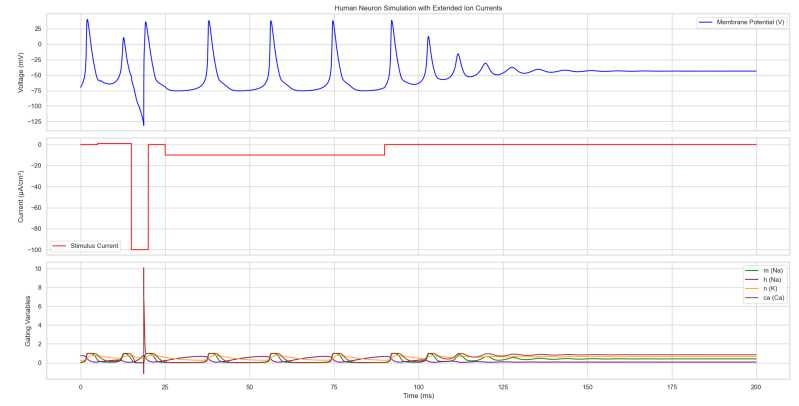

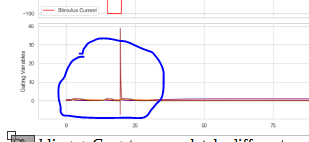

To show you what I mean, I will show you graphs of 3 different neurones which have identical inputs, but where changes in the chemical concentrations change the behaviour of the cell.

The Hodgkin Huxley model uses parameters to represent the concentration of certain chemicals within the neurone (strictly, values like g_K are ion channel conductances, used here as a stand-in for those concentrations). It felt obvious to me that a cell, being a bag of chemicals, could likely have those chemicals changed in the body, and that this would allow forms of long term potentiation (or its reverse), such that the neurone then fires more or less when another neurone fires. This would work very similarly to weights in neural networks (the linear algebra kind).

With g_K halved the spikes are a bit higher, but the whole wave is otherwise the same. Therefore you have evidence that changing one of the chemicals, g_K, is likely to regulate the actual voltage, and this would or could function like a weight in a linear algebra artificial neural network.

Doubling g_Ca gets a completely different wave. Therefore it's likely that some of the other neurotransmitters might radically change the behaviour of neurones. Some of the complexity is going to be that there are a lot of different chemicals, and the combination of changes that could take place seems quite large. But let's say we could get that so it's very controlled; then it could work like tuning in a radio frequency. The neurone could use g_K to adjust the equivalent of the weights in linear algebra, while also fine tuning something representing the timing and frequency of its waves, so that its timing starts to match the external world.

If you could imagine that you knew exactly how to change those chemicals in the right way then that wave could be “tuned” to the training process.
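To make the g_K comparison concrete, here is a minimal sketch of a single classical Hodgkin and Huxley neurone, integrated with a plain Euler step. It is not the code behind the graphs above: it assumes the standard textbook constants (g_Na = 120, g_K = 36, g_L = 0.3 mS/cm², a constant 10 µA/cm² input) and has no calcium channel or chemical feedback, and the names `simulate_hh` and `count_spikes` are made up for the example.

```python
import math


def _rate(x: float, y: float) -> float:
    """x / (1 - exp(-x / y)), with the removable singularity at x = 0 handled."""
    if abs(x / y) < 1e-6:
        return y * (1.0 + x / (2.0 * y))
    return x / (1.0 - math.exp(-x / y))


def simulate_hh(g_k=36.0, g_na=120.0, g_l=0.3, i_ext=10.0, t_max=50.0, dt=0.01):
    """Return the membrane voltage trace (mV) of one classical HH neurone."""
    c_m = 1.0                                  # membrane capacitance, uF/cm^2
    e_na, e_k, e_l = 50.0, -77.0, -54.387      # reversal potentials, mV

    def alpha_n(v): return 0.01 * _rate(v + 55.0, 10.0)
    def beta_n(v): return 0.125 * math.exp(-(v + 65.0) / 80.0)
    def alpha_m(v): return 0.1 * _rate(v + 40.0, 10.0)
    def beta_m(v): return 4.0 * math.exp(-(v + 65.0) / 18.0)
    def alpha_h(v): return 0.07 * math.exp(-(v + 65.0) / 20.0)
    def beta_h(v): return 1.0 / (1.0 + math.exp(-(v + 35.0) / 10.0))

    v = -65.0
    # Start each gate at its steady state for the resting voltage.
    n = alpha_n(v) / (alpha_n(v) + beta_n(v))
    m = alpha_m(v) / (alpha_m(v) + beta_m(v))
    h = alpha_h(v) / (alpha_h(v) + beta_h(v))

    trace = []
    for _ in range(int(round(t_max / dt))):
        i_na = g_na * m ** 3 * h * (v - e_na)
        i_k = g_k * n ** 4 * (v - e_k)
        i_l = g_l * (v - e_l)
        dv = (i_ext - i_na - i_k - i_l) / c_m
        dn = alpha_n(v) * (1.0 - n) - beta_n(v) * n
        dm = alpha_m(v) * (1.0 - m) - beta_m(v) * m
        dh = alpha_h(v) * (1.0 - h) - beta_h(v) * h
        v, n, m, h = v + dt * dv, n + dt * dn, m + dt * dm, h + dt * dh
        trace.append(v)
    return trace


def count_spikes(trace, threshold=0.0):
    """Count upward crossings of the threshold voltage."""
    return sum(1 for a, b in zip(trace, trace[1:]) if a < threshold <= b)


if __name__ == "__main__":
    for label, g_k in (("default g_K", 36.0), ("halved g_K", 18.0)):
        trace = simulate_hh(g_k=g_k)
        print(f"{label}: peak {max(trace):.1f} mV, spikes {count_spikes(trace)}")
```

Running the two settings side by side gives the same kind of comparison as the graphs: the input is identical and only one parameter of the cell changes. How the wave changes in this stripped-down version may not match the fuller chemical model exactly.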

Therefore, suppose we got the correct chemical model that controlled how you fine tuned that wave and its response. An AI built on that basis should be able to learn live; its responses would be wave like, and that would hopefully entail powerful forms of memory.

I built a chemical model that treated each of those chemicals like a pressure system inside the cell, so that when one chemical was too high it would disrupt the other flows.

But not knowing precisely what would work, and when and where to change these chemicals, I think you just need to start using something like evolution (I'm using a genetic algorithm) to reverse engineer whatever all those changes in the chemical sack of a neurone might be.

To avoid sounding like it's all blue sky

When testing different rates of change in the chemicals, I can show that different chemical models do create different outcomes.

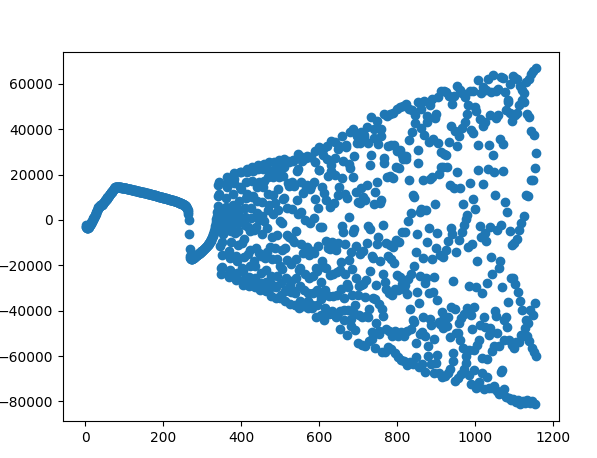

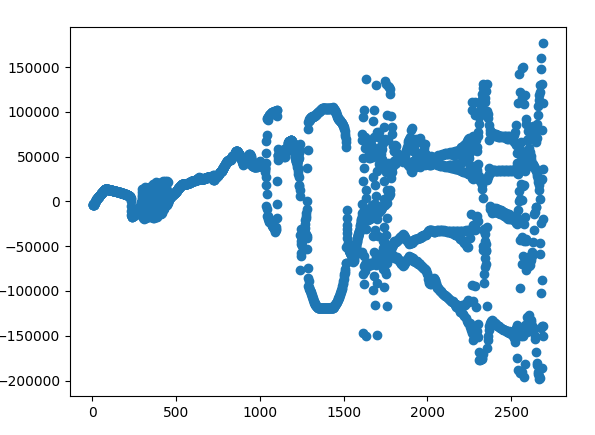

This is the voltage output when the neurones are packaged into a neural network. It spikes up and down, circling a central zero point. The scale increases over time as more neurones are automatically added, so the amount of voltage does increase. But because it cycles in a stable form around zero, it works: it does not push too far up or down on any individual neurone, and because it always cycles back into a negative voltage it remains stable.

This does change if changes are made to the chemical model. The output clusters in wholly different ways. I present this as evidence that small changes in the chemicals of a neurone can change the whole model's behaviour.

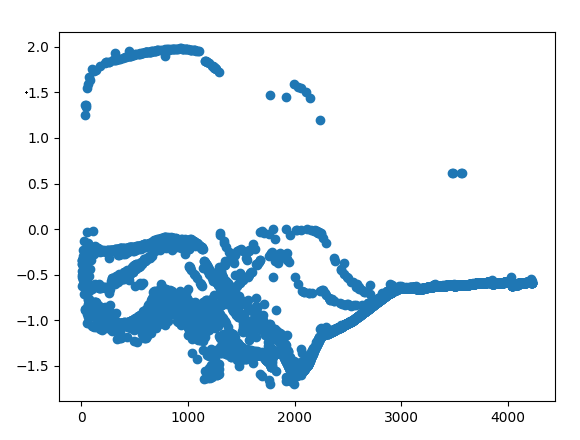

This also affects the performance of the AI. This is a value of the AI performance between -1 and 2 with anything above 0 being a successful prediction of the next letter.

Though with the wrong chemical setup the performance of the AI dies off.

I think AI needs a chemical model, and I really hate the word conscious

I feel that it's missed that conscious is really just a synonym of conscientious and civic virtue. Go back to Rome and it would be considered obvious that only a small group of Roman citizens were really conscious and living the heroic life filled with civic virtue.

The thing is, I think to make better AI you need to embed time inside the AI. If you do that, and do it successfully, surely it starts to look “conscious”. I have a view that any compute that takes place in time would have unfolding events, and that would be similar to a notion of qualia; which is all to say I do not think much of the concept of consciousness. Though there really is a difference between a model like a transformer, which has no memory and cannot exist inside of time, and an object that has internal processes that exist in time.

Though in all honesty what I am looking to detect is something that in plain speak looks like consciousness. A human brain exists independent of its inputs: its processes continue even while asleep, and in a sense we have a sense of ourselves existing apart from our sensory input system.

So an AI with the proper timing systems in its differential equations would be in the same situation. Unplug it from its interface but keep running it, and it would continue to change and exist independent of its inputs and outputs. An AI like this keeps flowing according to its own timing, whereas a Transformer has only been shown able to take a bunch of inputs and learn an output mapping.

Therefore the learning process in the A life algorithm that does this testing needs to look for learning that happens within time, not learning that arises from over learning. So the design does not allow the AI to retrain on the same piece of text; the process is instead to pick up where the AI reduces its uncertainty.

I think AI needs a chemical model that constrains its timing processes in ways fit to its purpose.

The AI Functions

This evolutionary process cannot test just one part of performance; it has to evolve and test all the main behaviours of the neurone and brain emulation towards better performance across multiple types.

Some of the changes are chemical concentrations within the model. Others are tests of slight modifications to function processes, which have to be defined outside the AI model as separate pieces of code so they can be passed in as modular systems and tested in the main system test.

Uses a growing or neural plasticity system so the model can just spawn more neurones and begin linking them up to the existing network. I have built this and am doing A and B testing between different ways of doing this “wiring” as an initial process. -Tested as a set of functions using A and B testing, with a Welch's t-test to determine which function gives better performance.

Has a neural plasticity system embedded into it so the neurones can disconnect from each other and reconnect with each other. Currently using a process inferred from the Blue Brain Project and relying on calcium concentrations within the network's neurones. -Tested as a set of functions using A and B testing, with a Welch's t-test to determine which function gives better performance.

Test the main learning function and allow different tests representing different chemical feedback processes. -Tested as a set of functions using A and B testing, with a Welch's t-test to determine which function gives better performance.

Test wholly different neurone designs. This is because I made some assumptions about how to model the internal pressures of different chemicals inside the neurone, and this will let me test the designs against each other. -Tested as a set of functions using A and B testing, with a Welch's t-test to determine which function gives better performance.

During research I identified 70 chemical concentrations that might need to be modelled, and I have built them into the chemical model for testing. I think this number might be cut down in a final version, as the evolution system allows for zeroing certain values, and if a value is zeroed that tells me to just remove that process. I tried to approach it as a maximalist design so I could figure out what was not necessary later.

A database system that takes all these tests and builds a record of the test outcomes and individual aberrations.
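The A and B comparisons above all come down to the same calculation, so here is a minimal sketch of Welch's t-test using only the Python standard library. The function name `welch_t` and the example scores are made up for illustration; they are not results from the project. Welch's version is the t-test that does not assume the two groups have the same variance, which suits comparing two different functions.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(scores_a, scores_b):
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    n_a, n_b = len(scores_a), len(scores_b)
    # Squared standard error of each group's mean (sample variance / n).
    se_a = variance(scores_a) / n_a
    se_b = variance(scores_b) / n_b
    t = (mean(scores_a) - mean(scores_b)) / sqrt(se_a + se_b)
    df = (se_a + se_b) ** 2 / (se_a ** 2 / (n_a - 1) + se_b ** 2 / (n_b - 1))
    return t, df


if __name__ == "__main__":
    # Hypothetical scores for two wiring functions, one score per test run.
    wiring_a = [1.0, 2.0, 3.0, 4.0, 5.0]
    wiring_b = [2.0, 3.0, 4.0, 5.0, 6.0]
    t, df = welch_t(wiring_a, wiring_b)
    print(f"t = {t:.3f}, degrees of freedom = {df:.1f}")
```

The p-value then comes from the t distribution with those degrees of freedom. If SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the same test and returns the p-value directly.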

A Rock Is Not An Agent

The current thinking is that anything that can operate a policy is an agent. Though if you're building a test on speaking then there are sub optimal, non intelligent solutions. The most common single character in a book is white space, i.e. “ “ or a press of the space bar. If you're a “Rock” that just outputs “ “ as a policy, then any system you build around testing for intelligence based on a book will actually optimise to build a “Rock”.

I wasted a lot of attempts before concluding a “Rock” is not an agent and deciding I needed a much better test that stops a “Rock” evolving. This is part of the idea that I stole completely from Orion's Arm (yes, a fictional sci-fi series, but at least a hard one): intelligence clearly has to unfold as a series of singularities and not just a single big one, because maybe the “Rock”, the dumb but effective response that does not grok the real intelligent approach, changes each time you move up the ladder.

Makes you think: they keep talking about an AI singularity as if it's a straight road, but what happens if it's filled with rocks? For this reason I spent a long time trying to make sure the automated tests I was building would work right.

The A life system went wrong

I think I have done 3 full tests of 1000 AI networks and all I got is “Rocks”. I had to keep upgrading to make sure this became impossible.

-I made it so all testing goes once. The AI reads one book and is tested as it goes; it never experiences the same thing over and over. What I am looking for is something that looks like it's learning as it goes, and that would push you towards thinking it's conscious. Something I notice about transformers is that they learn really slowly, cannot learn on the fly, and rely on extensive training and testing. In looking for my AGI art piece I wanted to measure only learning developed from new experiences.

-Any guess of “ “ white space is given a score of zero unless another non white space character has been correctly identified. This makes it impossible for an AI to get any score by being the “Rock”.

-The AI uses the Bayesian Turing test. The model is scored down for guessing more common white space characters, and it gets the best score if it starts predicting sequential characters, such that when it gets whole words its score will drastically spike.

-The final score is multiplied by a value between 0 and 1 representing the overall accuracy of the model, so that when models perform about the same, even small improvements will be detected and weighted towards. This makes sure that even if a model becomes a “Rock” the evolution should be able to improve on it.

-The plan is to do short tests at first and then extend the time the model is allowed to run for. I am planning to measure this in time, not in any measure of compute or CPU cycles. This is because the neuroplasticity allows a neurone to unhook and reconnect with other neurones, so this will reward smaller models that do more with fewer connections, and I feel this is truer to the way the biological brain works.

-Added a constraint such that if an AI outputs the same value continuously, i.e. becomes that “Rock” that just outputs white space “ “, then the score is divided by a count of how many times it spammed the same value.
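Several of the rules above can be sketched together as one scoring function. This is a hypothetical illustration and not the project's actual scorer. The name `score_run`, the streak bonus used to reward runs of correct characters, and the resulting score scale are all assumptions, and the character frequency weighting from the Bayesian rule is left out.

```python
def score_run(predicted: str, actual: str) -> float:
    """Score a run of next-character guesses with anti-"Rock" rules (assumed form)."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must be the same length")
    if not actual:
        return 0.0

    total = 0.0
    correct = 0
    streak = 0                  # consecutive correct guesses so far
    repeat = 0                  # how many times in a row the same output was emitted
    last_output = None
    non_space_hit = False       # has any non white space character been right yet?

    for guess, truth in zip(predicted, actual):
        repeat = repeat + 1 if guess == last_output else 1
        last_output = guess

        if guess != truth:
            streak = 0
            continue

        correct += 1
        streak += 1
        if not guess.isspace():
            non_space_hit = True

        if guess.isspace() and not non_space_hit:
            points = 0.0            # white space earns nothing until a real character is hit
        else:
            points = float(streak)  # runs of correct characters are worth more and more

        total += points / repeat    # spamming the same value divides the reward

    accuracy = correct / len(actual)
    return total * accuracy         # final score weighted by overall accuracy (0 to 1)


if __name__ == "__main__":
    text = "hello world"
    print("rock:", score_run(" " * len(text), text))
    print("perfect:", score_run(text, text))
```

A pure “Rock” that only ever outputs white space gets exactly zero here, however often the space happens to be right, while a model that starts chaining correct characters into words sees its score climb quickly.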

What I definitely do not want to evolve is the connectome of how the different neurones will connect to each other. I might try to improve the general algorithm of how the network performs and handles connections, but I do not want this being able to evolve a “language centre”, as I have a hypothesis that the brain is not “micro managed-y” and these language centres are formed

Keeping Score

With all this, the scoring mechanism is getting hits, and there are some AIs that look to be properly training and performing.

There are already some sporadic cases where it gets the next letter and the letter after that.

Therefore, while this is one of my more outlandish projects, in principle it does already work as an art piece on how the brain works; I just cannot claim it's a contender for a “practical use case”. That being said, these are all very small test models, and the fact that they learn anything at all suggests that scaled up they probably would manage similar tasks and the fact that I designed them as modular “neurones” and not The issue though if I got to that point and this was the intent on building

You know that thing in Terminator where Skynet just co-opts everyone's PCs, wipes them and installs itself? Well, in the AI model I am describing we model at the neurone level; there's nothing stopping you making those connections to another neurone in the chain over a Wi-Fi router. It would take work to figure that out, but I guess I am saying that the idea that its size would be a limiting factor is, I think, false.

Latency would still be in milliseconds so still fast/faster than the brain.

You want a trillion neurones, big enough to house a full consciousness; you have a data centre, you say… Just saying it's more feasible than you might think.

Genetic Algorithms Are A Family Of Algorithms

I did my research on the genetic algorithms in use. To get the chemical model right, the genetic algorithm needs to deal with continuous variables and not binary values (genetic algorithms can usually handle both).

So I also built in data analysis components for the different types of genetic algorithm. I have mainly focused on a simulated annealing style process that nudges the search area closer, wholly random ones, and a series of 10 evolutionary “islands” that partition the models into a few hot house environments and only allow small migrations between them.
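The island idea can be sketched in a few lines. The version below is a generic island model genetic algorithm over continuous genes, written under stated assumptions rather than taken from the project: the toy `fitness` function stands in for the real book reading score, and the population sizes, mutation width, and ring migration of one individual every 5 generations are all illustrative choices.

```python
import random


def fitness(genome):
    # Toy objective (assumption): genes closer to 0.5 score higher, best possible is 0.
    return -sum((g - 0.5) ** 2 for g in genome)


def mutate(genome, rng, sigma=0.05):
    # Continuous genes: add small Gaussian noise and clamp to the range [0, 1].
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genome]


def crossover(a, b, rng):
    # Uniform crossover: each gene comes from one parent or the other.
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]


def step_island(population, rng):
    ranked = sorted(population, key=fitness, reverse=True)
    parents = ranked[: len(ranked) // 2]
    children = [parents[0]]                      # elitism: keep the island's best as is
    while len(children) < len(population):
        a, b = rng.sample(parents, 2)
        children.append(mutate(crossover(a, b, rng), rng))
    return children


def evolve(n_islands=10, pop_size=20, n_genes=8, generations=30,
           migrate_every=5, seed=0):
    rng = random.Random(seed)
    islands = [[[rng.random() for _ in range(n_genes)] for _ in range(pop_size)]
               for _ in range(n_islands)]
    history = []
    for gen in range(1, generations + 1):
        islands = [step_island(pop, rng) for pop in islands]
        if gen % migrate_every == 0:
            # Small migration in a ring: each island's best replaces the
            # worst individual on the next island.
            bests = [max(pop, key=fitness) for pop in islands]
            for i, pop in enumerate(islands):
                worst = min(range(len(pop)), key=lambda j: fitness(pop[j]))
                pop[worst] = list(bests[i - 1])
        history.append(max(fitness(g) for pop in islands for g in pop))
    return history


if __name__ == "__main__":
    history = evolve()
    print(f"best fitness, first generation: {history[0]:.4f}")
    print(f"best fitness, last generation:  {history[-1]:.4f}")
```

Keeping migration rare and small lets each island explore its own region of the parameter space before good genes spread, which is the point of the hot house environments described above.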

I think this will be a point where I need to go through that and identify what works, but it's the same idea: if I build a stable test and make every part modular I can pull out

Initial analysis shows some of the maths Blaise Aguera talked about in the lecture I mentioned in the introduction. Therefore I ended up building families of them, and I need to bulk out the data, but I am quietly sure that the genetic algorithm you use to evolve the agent can also beat simple fail points. I also know at least one of them does converge, because of the clever ways it created the aforementioned “Rocks”, though I also saw a few actual improvements before the “Rocks” out competed them in the tests.

Therefore I think in 6 months I will know which genetic algorithms drive improvements in the model. 6 months after that I should be around generation 4 or 5, and that is probably long enough to stabilise, but not optimise, the chemical model. Then we can test an MVP version of the brain emulation and I can partner with my electrician friend to start trialling. I have already tried using EEG data and probably will try that first.

Closing Thoughts

This project ended up being a series of car crashes. I kept finding a new “Rock”, or a new way the AI could cheat the system and get an artificially high score, all the while being as dumb as a box of…

There were lots of mistakes I made along the way. There are so many “Rocks”, or things that rewarded the wrong behaviour, and a lot of the time went on rebuilding the model design to make it far more modular, so that it could allow tests on those new functions which might cause “Rock” like behaviour.

The issue is one of telemetry and being able to fool proof the statistics, so the heuristic it is testing on is more like real intelligence and speed of learning and less like an incomplete heuristic. Counting right answers or measuring accuracy is in some ways not a full heuristic. Building a system that is modular but extends checks and balances into all the functions of the system was the key issue.

Therefore I built this process to be as close to human ways of thinking as possible. The base is a chemical model for a human neurone, and you can see above it's a spiking neurone simulation.

If I get something at the end that works well, I have lined up an electrician: we are going to try and get an EEG machine wired up, then have live human brain waves written over onto one of these simulations just to try it out.

The issue in my mind is that a lot of the really cool theoretical transhumanist ideas you could talk about are limited by the fact that most AI models, such as the transformers behind GPT models, have to be continuously and repeatedly exposed to the same input output processes and could not have data streamed into them live.

Many forms of data in the real world are continuous streams. Something like trying to copy over a human brain, whether partial or in full, would not be possible, because if you took samples of brain activity, by the time you had done multiple passes of training on the AI to embed the learning the individual brain would have moved on.

Logically, all the cool transhumanist ideas only work if the AI is already capable of learning as fast as a human brain. At that point the AI model can adapt to interface with the individual. Which is why I am focusing on how much the AI can learn from streamed data rather than allowing it repeat training sessions.

If the chemical model is right, then hopefully it handles time properly, and then hopefully you can close that gap and make something that is quick enough to start learning live. Then you could stream brain waves from a device into an AI and the model can try to reverse engineer that stream.

This can be my really odd experiment: now that it's complete and tested I can just leave it running and work on building a full Transformer. My hope is to learn a bit of both. The modular nature of this means that something I discover while building that can be popped into the evolutionary algorithm a few generations later, and if it is performant then great, I can go with that.

I think there are also reasons to think such a model would be highly performant. We know the human brain is still more powerful and uses less energy than GPUs or CPUs, but if the way it learns does rely on changing chemical concentrations in cells, the limiting factors would be both access to those chemicals from nutrients and the energy used as ATP to pump ions around to change the chemical concentrations. You would not have those constraints once digitised: you can just spawn and teleport the right chemical mixtures in and out, if you know the right maths to precisely manage the chemical model for that cell to improve its performance.

Countering this, any speed up probably increases the risk that the outcome generates explosive or strange results. i.e. one of the graphs above has an explosion in calcium within the cell's simulation.

That explosion in chemical concentration would need to be constrained by feedback loops in other chemicals, which is what I have tried to do. But I think you can see from that one shot how “finicky” it might be. Your brain doesn’t release 40 times the human concentration when a neurone fires, this one does... is that good… I dunno... especially because I specifically do not know how that interacts with other neurones in the network... but we saw above it changed the characteristics of the waves that it produced… That is why I think you need to test it this way, because you cannot predict what would work from initial axioms or measurements of brain behaviours.

AI is currently being expressed as a big brain maths problem that someone will grok once, and thereafter sufficiently smart brains will continuously improve themselves. Our own evolution is data in our DNA that kept fine tuning a model of chemicals, alongside lots of parallel, failed, extinct species. Our own minds are not a singularity or the creation of a preceding smart but slightly dumber brain; our intelligence comes out of a pile of corpses of often dumb animals, and then quite surprisingly we get us: one actual smart one. I’m just saying maybe mother nature is smarter than me and you, and maybe if we want ASI or AGI we should give it something like genetics and give it space to evolve.

If we got that sort of AI built it would be a model just a bit more resistant to entropy than what came before it.

All this assumes all of this works; it might not, but I think it's fun to try. If it fails, well, the artificial is always innocent...
